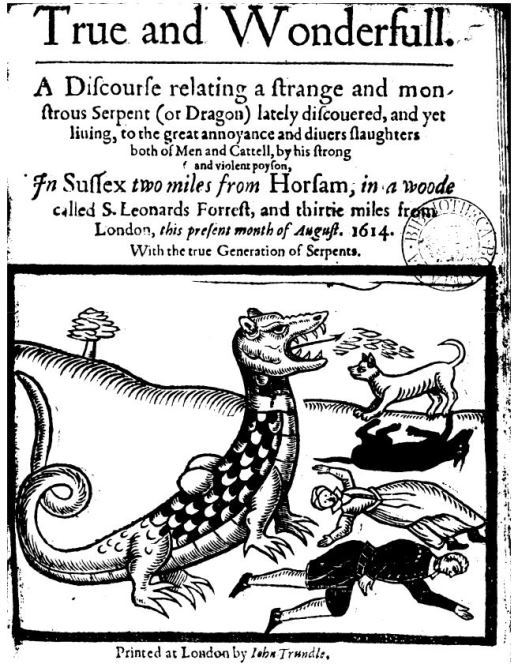

There is a kind of fairytale that, until Donald Trump or before social media, we lived in an age of truth. This is nonsense. In the British Library, there is a newsbook from 1614 which reports on “a strange and monstrous Serpent (or Dragon)” living in the woods near Horsham, in Sussex, “to the great annoyance and divers slaughters both of Men and Cattell, by his strong and violent poyson”. In the 20th century, The Protocols of the Elders of Zion was published in the form of a secret document detailing a plot for Jewish world domination. Fake news may be a modern term, but throughout history, people have been exposed to heavy doses of it.

There is also a notion that today’s politicians are particularly untruthful, as if there had been no Vietnam, no Watergate, no lies about race and colonialism, and that way back when, politicians were honest people. In his pamphlet The Art of Political Lying, John Arbuthnot remarked that “The People may as well all pretend to be Lords of Manors, and possess great Estates, as to have Truth told them in matters of Government.” Truth and truthful politicians have always been in short supply.

What makes the current age so dangerous is that we have developed systems that make it easier than at any time in human history to spread a lie, and to make a lie look like God’s honest truth. As we head into the first election in the Age of AI, we need to grapple with the fact that we are entering an age where it may be impossible to tell what’s true and what’s fake.

Source: British Library

In MIT Technology Review, Melissa Heikkilä discusses how the AI startup Synthesia created a deepfake, sorry, a “synthetic” video avatar of her that was startlingly lifelike:

“The day after my final visit, Voica emails me the videos with my avatar. When the first one starts playing, I am taken aback. It’s as painful as seeing yourself on camera or hearing a recording of your voice. Then I catch myself. At first I thought the avatar was me.

The more I watch videos of “myself,” the more I spiral. Do I really squint that much? Blink that much? And move my jaw like that? Jesus.”

We are living in a time when the fake thing looks like the real thing. If technology has arrived at a point where an AI-generated avatar of you is so good that your first instinct is to assume it’s real, then what hope do people have of figuring out what’s true or false on the internet?

We have relied on content moderation to help us out. Social media platforms, including the video platform TikTok, have been pressed to employ content moderators and adopt content moderation standards. Even there, we have run into problems. Consider Trump’s charge that Covid-19 leaked from a lab in Wuhan. Trump, the emperor of liars, produced the reaction he had earned: disbelief and accusations of racism against Asians. It didn’t help that he called it the “Wuhan virus”. Platforms were quick to moderate and take down any content that suggested that Covid-19 leaked from a lab. Today, the lab leak theory is so plausible that the Washington Post recently wrote that the political toxicity of the topic, and China’s blocking of any investigation, had made it hard to verify. The downside of content moderation, then, is that we may suppress the truth and promote what turns out to be false. Go back to the years before the Civil War, and most Americans believed that black people were inferior to white people. This was a “fact” of white supremacy. In polite society, saying otherwise was not done. If we had had social media back then, content moderators would have been striking down content that argued for racial equality.

When we combine the virality of social media with the truth-likeness of AI-generated content, we find ourselves in even greater difficulties. There’s a class of stuff where it’s easy to check if what’s said is true. What’s the capital of Vietnam? Hanoi! Etc. There’s also a class of stuff where it’s impossible to check, because the answer is so tied up with other beliefs, or because the truth is still being worked out. For example, science is built around the idea that even well-established truths, like gravity, are merely provisional, because evidence can always emerge to refute them. Where evidence is weak or still being gathered, the “truth” changes very quickly, as it did with Covid-19, when scientists first believed we didn’t need masks, and then argued that we did. That confusion was simply a function of the novelty of what was being investigated. As for the stuff bound up with our beliefs, that’s stuff like, “Should we have juries in the judicial system?” The answer you get depends on your values. The genius, if you like, of AI is that it can take what it knows about your beliefs and create something that looks like the truth. The question for us, with the first election in the Age of AI coming up, is how do we solve this problem?